Sample program that can send the recognition data from HVC-P2 to Fujitsu IoT Platform using REST (HTTP)

Dependencies: AsciiFont GR-PEACH_video GraphicsFramework LCD_shield_config R_BSP USBHost_custom easy-connect-gr-peach mbed-http picojson

Information

This page contains both an English and a Japanese description. The English description comes first, followed by the Japanese one.

Overview

This sample program shows how to send the recognition data gathered by the Omron HVC-P2 (Human Vision Components B5T-007001) to the IoT Platform operated by FUJITSU ( http://jp.fujitsu.com/solutions/cloud/k5/function/paas/iot-platform/ ).

Hardware Configuration

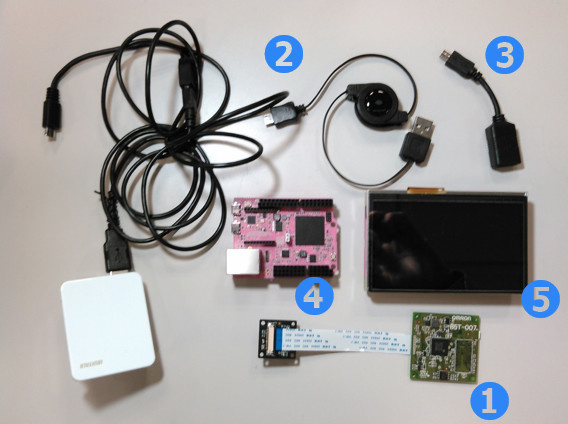

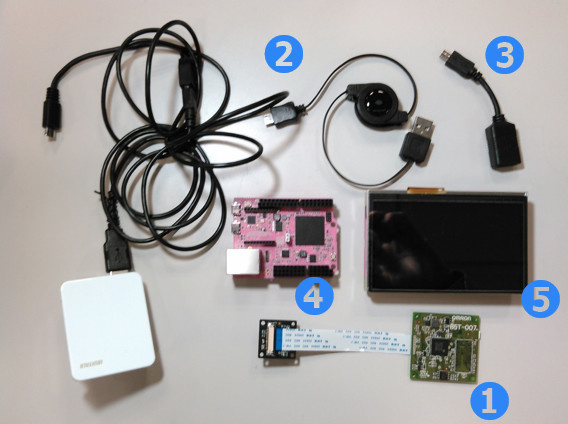

- GR-PEACH 1 set ( https://developer.mbed.org/platforms/Renesas-GR-PEACH/ )

- LCD Shield 1 set ( https://developer.mbed.org/teams/Renesas/Wiki/LCD-shield )

- HVC-P2 1 set ( Human Vision Components B5T-007001 ) ( https://plus-sensin.omron.com/product/B5T-007001/ )

- USBA - Micro USB Cable 2 sets

- USBA (Female) - Micro USB (Male) Adapter 1 set

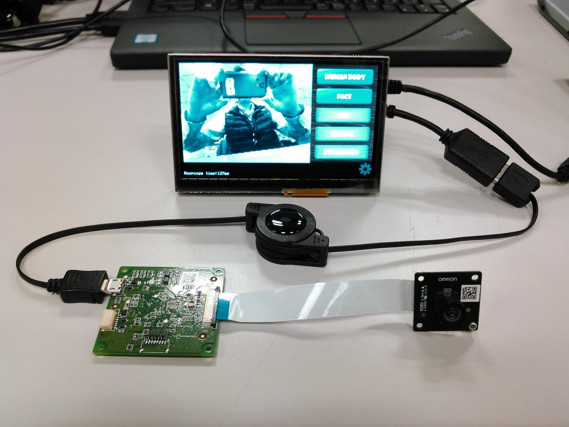

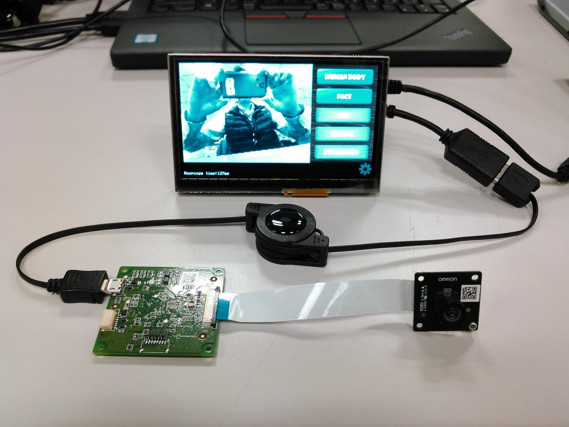

To run this sample program, connect the above hardware as shown below:

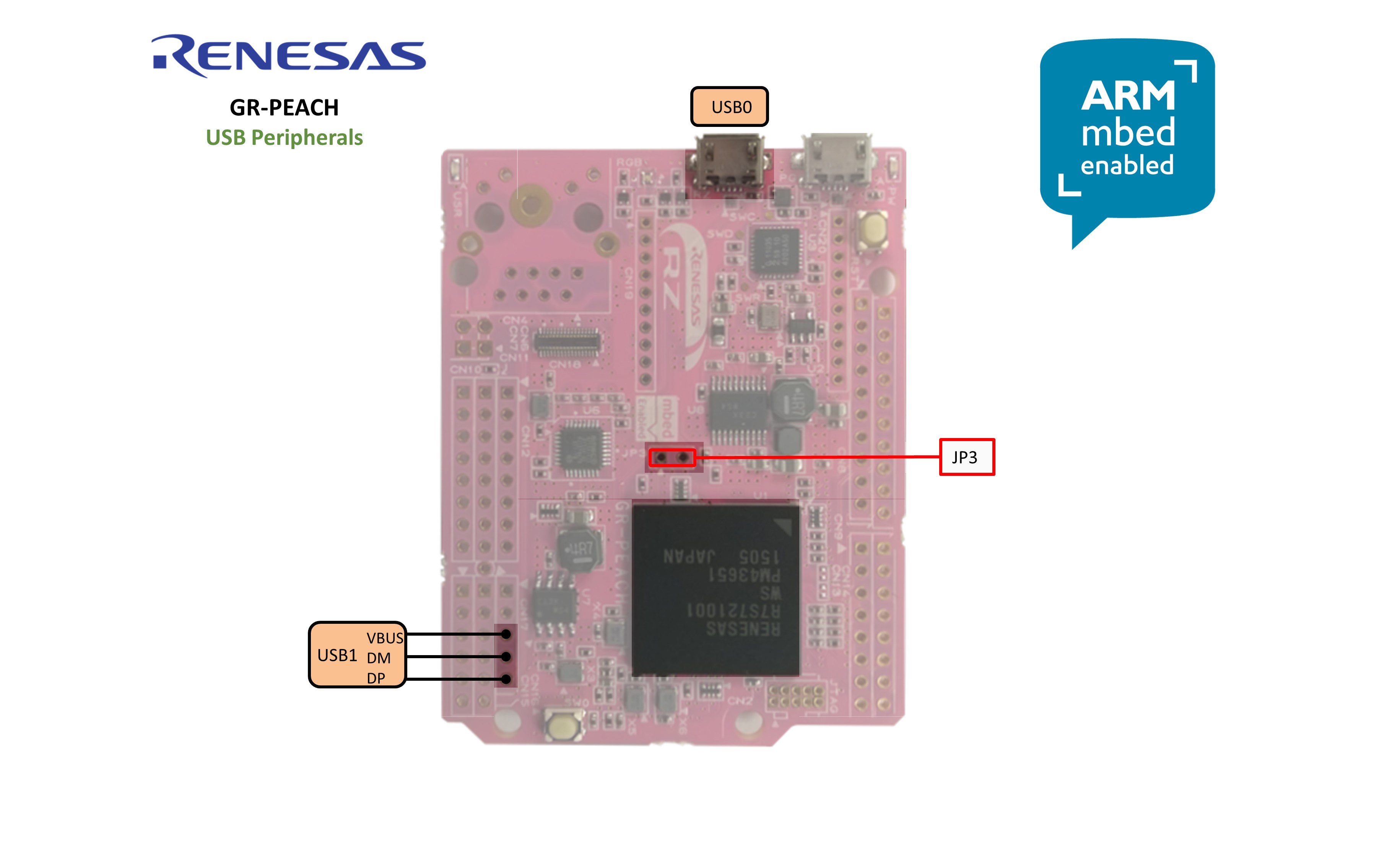

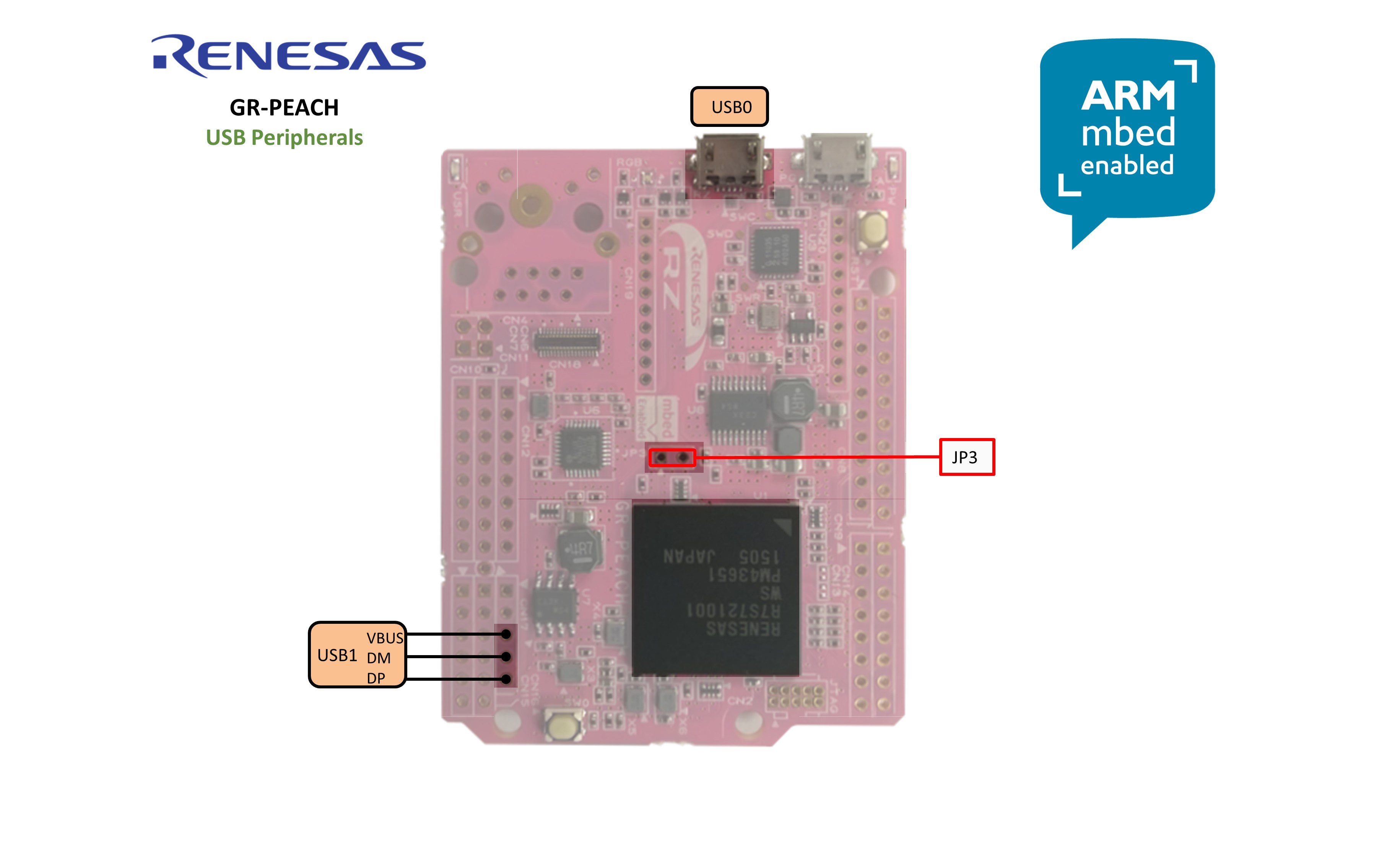

Also, please close (short) jumper JP3 on GR-PEACH as follows:

Application Preconfiguration

- Configure Ethernet settings. For details, please refer to the following link:

https://developer.mbed.org/teams/Renesas/code/GR-PEACH_IoT_Platform_HTTP_sample/wiki/Ethernet-settings

- On IoT Platform, set up the resource in which the gathered data will be stored, along with its access code. For details on resources and access codes, please refer to the following links:

https://iot-docs.jp-east-1.paas.cloud.global.fujitsu.com/en/manual/userguide_en.pdf

https://iot-docs.jp-east-1.paas.cloud.global.fujitsu.com/en/manual/apireference_en.pdf

https://iot-docs.jp-east-1.paas.cloud.global.fujitsu.com/en/manual/portalmanual_en.pdf

Build Procedure

- Import this sample program into the mbed Compiler.

- In GR-PEACH_HVC-P2_IoTPlatform_http/IoT_Platform/iot_platform.cpp, replace <ACCESS CODE> with the access code you set up on IoT Platform. For details on how to set up the access code, please refer to Application Preconfiguration above. Then delete the line beginning with the #error directive.

Access Code configuration

#define ACCESS_CODE <Access CODE>

#error "You need to replace <Access CODE for your resource> with yours"

- In GR-PEACH_HVC-P2_IoTPlatform_http/IoT_Platform/iot_platform.cpp, replace <Base URI>, <Tenant ID> and <Path-to-Resource> below with your own values and delete the lines beginning with the #error directive. For details on <Base URI> and <Tenant ID>, please contact FUJITSU LIMITED. For details on <Path-to-Resource>, please refer to Application Preconfiguration above.

URI configuration

std::string put_uri_base("<Base URI>/v1/<Tenant ID>/<Path-to-Resource>.json");

#error "You need to replace <Base URI>, <Tenant ID> and <Path-to-Resource> with yours"

**snip**

std::string get_uri("<Base URI>/v1/<Tenant ID>/<Path-to-Resource>/_past.json");

#error "You need to replace <Base URI>, <Tenant ID> and <Path-to-Resource> with yours"

- Compile the program. If compilation completes successfully, the binary is downloaded to your PC.

- Plug an Ethernet cable into GR-PEACH.

- Plug the micro-USB cable into the port next to the RESET button. If GR-PEACH is successfully recognized, it appears as a USB flash disk named mbed, as shown below:

- Copy the downloaded binary to the mbed drive.

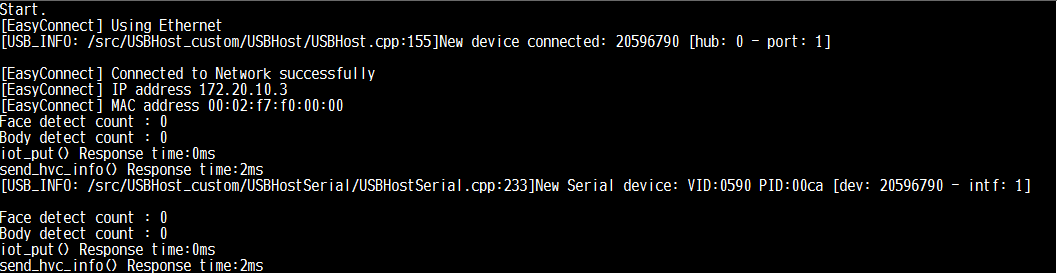

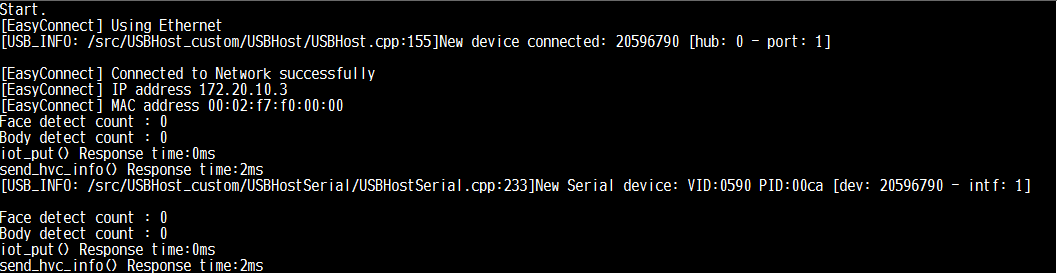

- Press the RESET button on GR-PEACH to run the program. If it runs successfully, the following message appears on the terminal:

Format of Data to be sent to IoT Platform

In this program, the recognition data sent from HVC-P2 is serialized into the following JSON format and sent to IoT Platform:

- Face detection data

{

"RecordType": "HVC-P2(face)",

"id": "<GR-PEACH ID>-<Sensor ID>",

"FaceRectangle": {

"Height": xxxx,

"Left": xxxx,

"Top": xxxx,

"Width": xxxx

},

"Gender": "male" or "female",

"Scores": {

"Anger": zzz,

"Happiness": zzz,

"Neutral": zzz,

"Sadness": zzz,

"Surprise": zzz

}

}

xxxx: Top-left coordinate, width and height of the rectangle circumscribing the detected face in LCD display coordinate system

zzz: numeric value indicating each expression estimated from the detected face


- Body detection data

{

"RecordType": "HVC-P2(body)",

"id": "<GR-PEACH ID>-<Sensor ID>",

"BodyRectangle": {

"Height": xxxx,

"Left": xxxx,

"Top": xxxx,

"Width": xxxx

}

}

xxxx: Top-left coordinate, width and height of the rectangle circumscribing the detected body in LCD display coordinate system
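As a rough illustration of the face record layout above, the following sketch assembles the same fields with plain stream formatting. It is not how the sample builds its payload (the sample uses picojson); `FaceRecord` and `to_json` are hypothetical names introduced here, the key names follow the strings used in iot_platform.cpp, and the field values passed in are placeholders.

```cpp
#include <sstream>
#include <string>

// Hypothetical container for one face detection result (not a type from the sample).
struct FaceRecord {
    std::string id;                    // "<GR-PEACH ID>-<Sensor ID>"
    int top, left, width, height;      // rectangle in LCD display coordinates
    std::string gender;                // "male" or "female"
    int anger, happiness, neutral, sadness, surprise;
};

// Serializes one record in the documented layout.
// Assumption: id and gender contain no characters that need JSON escaping.
std::string to_json(const FaceRecord& r)
{
    std::ostringstream out;
    out << "{\"RecordType\":\"HVC-P2(face)\","
        << "\"id\":\"" << r.id << "\","
        << "\"FaceRectangle\":{"
        << "\"Height\":" << r.height << ","
        << "\"Left\":" << r.left << ","
        << "\"Top\":" << r.top << ","
        << "\"Width\":" << r.width << "},"
        << "\"Gender\":\"" << r.gender << "\","
        << "\"Scores\":{"
        << "\"Anger\":" << r.anger << ","
        << "\"Happiness\":" << r.happiness << ","
        << "\"Neutral\":" << r.neutral << ","
        << "\"Sadness\":" << r.sadness << ","
        << "\"Surprise\":" << r.surprise << "}}";
    return out.str();
}
```

A body record would follow the same pattern with only the `RecordType`, `id` and rectangle fields.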

Overview

This program is a sample that sends the recognition data collected by the Omron HVC-P2 (Human Vision Components B5T-007001) to the IoT Platform operated by FUJITSU ( http://jp.fujitsu.com/solutions/cloud/k5/function/paas/iot-platform/ ).

Hardware Configuration

- GR-PEACH 1 set ( https://developer.mbed.org/platforms/Renesas-GR-PEACH/ )

- LCD Shield 1 set ( https://developer.mbed.org/teams/Renesas/Wiki/LCD-shield )

- HVC-P2 1 set ( Human Vision Components B5T-007001 ) ( https://plus-sensin.omron.com/product/B5T-007001/ )

- USBA - Micro USB Cable 2 sets

- USBA (Female) - Micro USB (Male) Adapter 1 set

To run this program, connect the above hardware as shown in the figure below.

Also, short JP3 on GR-PEACH as shown in the figure below.

Application Preconfiguration

- Configure the Ethernet settings. For details, please refer to the following link:

https://developer.mbed.org/teams/Renesas/code/GR-PEACH_IoT_Platform_HTTP_sample/wiki/Ethernet-settings

- On IoT Platform, set up the resource that stores the data collected by HVC-P2, along with its access code. For details, please refer to the following links:

https://iot-docs.jp-east-1.paas.cloud.global.fujitsu.com/en/manual/userguide_en.pdf

https://iot-docs.jp-east-1.paas.cloud.global.fujitsu.com/en/manual/apireference_en.pdf

https://iot-docs.jp-east-1.paas.cloud.global.fujitsu.com/en/manual/portalmanual_en.pdf

Build Procedure

- Import this sample program into the mbed Compiler.

- In GR-PEACH_HVC-P2_IoTPlatform_http/IoT_Platform/iot_platform.cpp, shown below, overwrite <ACCESS CODE> with the access code you set up on IoT Platform. For details on how to set up the access code, please refer to Application Preconfiguration above. Also delete the line beginning with the #error directive.

Access Code configuration

#define ACCESS_CODE <Access CODE>

#error "You need to replace <Access CODE for your resource> with yours"

- In GR-PEACH_HVC-P2_IoTPlatform_http/IoT_Platform/iot_platform.cpp, shown below, replace <Base URI>, <Tenant ID> and <Path-to-Resource> with appropriate values and delete the lines beginning with the #error directive. For details on <Base URI> and <Tenant ID>, please contact FUJITSU LIMITED. For details on <Path-to-Resource>, please refer to Application Preconfiguration above.

URI configuration

std::string put_uri_base("<Base URI>/v1/<Tenant ID>/<Path-to-Resource>.json");

#error "You need to replace <Base URI>, <Tenant ID> and <Path-to-Resource> with yours"

**snip**

std::string get_uri("<Base URI>/v1/<Tenant ID>/<Path-to-Resource>/_past.json");

#error "You need to replace <Base URI>, <Tenant ID> and <Path-to-Resource> with yours"

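- Compile the program. If compilation completes successfully, the binary is downloaded to your PC.

- Plug an Ethernet cable into the RJ-45 connector of GR-PEACH.

- Plug the USBA - Micro USB cable into the Micro USB port located next to the RESET button of GR-PEACH. If GR-PEACH is successfully recognized, it appears as a USB drive named mbed, as shown in the figure below.

- Copy the downloaded binary to the mbed drive.

- Press the RESET button to run the program. If it runs successfully, the message shown below appears on the terminal.

Format of Data to be sent to IoT Platform

In this program, the recognition data collected by HVC-P2 is serialized into the following JSON format and sent to IoT Platform.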

- Face detection data

{

"RecordType": "HVC-P2(face)",

"id": "<GR-PEACH ID>-<Sensor ID>",

"FaceRectangle": {

"Height": xxxx,

"Left": xxxx,

"Top": xxxx,

"Width": xxxx

},

"Gender": "male" or "female",

"Scores": {

"Anger": zzz,

"Happiness": zzz,

"Neutral": zzz,

"Sadness": zzz,

"Surprise": zzz

}

}

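xxxx: Top-left coordinate, width and height of the rectangle circumscribing the detected face in the LCD display coordinate system

zzz: numeric value indicating each expression estimated from the detected face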


- Body detection data

{

"RecordType": "HVC-P2(body)",

"id": "<GR-PEACH ID>-<Sensor ID>",

"BodyRectangle": {

"Height": xxxx,

"Left": xxxx,

"Top": xxxx,

"Width": xxxx

}

}

xxxx: Top-left coordinate, width and height of the rectangle circumscribing the detected body in the LCD display coordinate system

IoT_Platform/iot_platform.cpp

- Committer: Osamu Nakamura

- Date: 2017-09-07

- Revision: 0:813a237f1c50

File content as of revision 0:813a237f1c50:

#include "mbed.h"

#include "picojson.h"

#include "iot_platform.h"

#include <string>

#include <iostream>

#include <vector>

#include "easy-connect.h"

#include "http_request.h"

#include "NTPClient.h"

#include <time.h>

/* Detect result */

result_hvcp2_fd_t result_hvcp2_fd[DETECT_MAX];

result_hvcp2_bd_t result_hvcp2_bd[DETECT_MAX];

uint32_t result_hvcp2_bd_cnt;

uint32_t result_hvcp2_fd_cnt;

Timer http_resp_time; // response time

uint16_t data[4]; //for color data

#define JST_OFFSET 9

#define ACCESS_CODE "Bearer <ACCESS CODE>"

#error "You have to replace the above <ACCESS CODE> with yours"

std::string put_uri_base("<Base URI>/v1/<Tenant ID>/<Path-to-Resource>.json");

#error "You have to replace <Base URI>, <Tenant ID> and <Path-to-Resource> with yours"

std::string put_uri;

// json-object for camera

picojson::object o_bd[DETECT_MAX], o_fd[DETECT_MAX], o_fr[DETECT_MAX], o_scr[DETECT_MAX];

// json-object for sensor

picojson::object o_acc, o_atmo, o_col, o_temp;

picojson::array data_array_acc(6);

picojson::array data_array_atmo(6);

picojson::array data_array_col(6);

picojson::array data_array_temp(6);

// URI for GET request

std::string get_uri("<Base URI>/v1/<Tenant ID>/<Path-to-Resource>/_past.json");

#error "You have to replace <Base URI>, <Tenant ID> and <Path-to-Resource> with yours"
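The two URI strings above share the same `<Base URI>/v1/<Tenant ID>/<Path-to-Resource>` stem and differ only in their suffix. The following is a minimal sketch of composing both from the three site-specific parts. The helper names are hypothetical and not part of the sample, and the values used in the usage note are made-up placeholders, not real FUJITSU endpoints.

```cpp
#include <string>

// Hypothetical helper: joins the three site-specific parts into the shared stem.
std::string resource_stem(const std::string& base_uri,
                          const std::string& tenant_id,
                          const std::string& path_to_resource)
{
    return base_uri + "/v1/" + tenant_id + "/" + path_to_resource;
}

// URI used for PUT requests: "<stem>.json".
std::string make_put_uri(const std::string& base_uri,
                         const std::string& tenant_id,
                         const std::string& path_to_resource)
{
    return resource_stem(base_uri, tenant_id, path_to_resource) + ".json";
}

// URI used for GET requests: "<stem>/_past.json".
std::string make_get_uri(const std::string& base_uri,
                         const std::string& tenant_id,
                         const std::string& path_to_resource)
{
    return resource_stem(base_uri, tenant_id, path_to_resource) + "/_past.json";
}
```

For example, `make_put_uri("http://iot.example.invalid", "TENANT", "sensors/hvc")` yields `http://iot.example.invalid/v1/TENANT/sensors/hvc.json`.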

void dump_response(HttpResponse* res)

{

DEBUG_PRINT("Status: %d - %s\n", res->get_status_code(),

res->get_status_message().c_str());

DEBUG_PRINT("Headers:\n");

for (size_t ix = 0; ix < res->get_headers_length(); ix++) {

DEBUG_PRINT("\t%s: %s\n", res->get_headers_fields()[ix]->c_str(),

res->get_headers_values()[ix]->c_str());

}

DEBUG_PRINT("\nBody (%d bytes):\n\n%s\n", res->get_body_length(),

res->get_body_as_string().c_str());

}

std::string create_put_uri(std::string uri_base)

{

time_t ctTime;

struct tm *pnow;

char date_and_hour[50];

std::string uri;

ctTime = time(NULL);

pnow = localtime(&ctTime);

sprintf(date_and_hour, "?$date=%04d%02d%02dT%02d%02d%02d.000%%2B%02d00",

(pnow->tm_year + 1900), (pnow->tm_mon + 1), pnow->tm_mday,

(pnow->tm_hour + JST_OFFSET - pnow->tm_isdst), pnow->tm_min,

pnow->tm_sec, (JST_OFFSET - pnow->tm_isdst));

uri = uri_base + date_and_hour;

return(uri);

}
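create_put_uri() adds JST_OFFSET directly to tm_hour, so if the clock holds UTC the hour field can exceed 23 late in the day without the date rolling over. A minimal sketch of one alternative follows: shift the epoch time first and break it down afterwards. It assumes the clock holds UTC, which the sample does not state, and `make_date_query` is a hypothetical name rather than a function from the sample.

```cpp
#include <cassert>
#include <cstdio>
#include <ctime>
#include <string>

// Builds the "?$date=..." query string for a given UTC time and a
// non-negative whole-hour offset (9 for JST).
std::string make_date_query(std::time_t utc_now, int offset_hours)
{
    // "%2B" is the URL-encoded '+', so this format only fits offsets >= 0.
    assert(offset_hours >= 0 && offset_hours <= 14);

    // Shift the epoch value first so hour, day, month and year roll over together.
    const std::time_t shifted =
        utc_now + static_cast<std::time_t>(offset_hours) * 3600;
    const std::tm* parts = std::gmtime(&shifted);
    if (parts == nullptr) {
        return std::string();   // conversion failed; caller decides what to do
    }

    char buf[64];
    std::snprintf(buf, sizeof(buf),
                  "?$date=%04d%02d%02dT%02d%02d%02d.000%%2B%02d00",
                  parts->tm_year + 1900, parts->tm_mon + 1, parts->tm_mday,
                  parts->tm_hour, parts->tm_min, parts->tm_sec, offset_hours);
    return std::string(buf);
}
```

With this approach, 20:00:00 UTC on 2017-09-07 (epoch 1504814400) becomes 05:00:00 on 2017-09-08 in the query string instead of hour 29 on the 7th.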

int iot_put(NetworkInterface *network, picojson::object o4)

{

#ifdef ENABLED_NTP

put_uri = create_put_uri(put_uri_base);

#else

put_uri = put_uri_base;

#endif // ENABLED_NTP

// PUT request to IoT Platform

HttpRequest* put_req = new HttpRequest(network, HTTP_PUT, put_uri.c_str());

put_req->set_header("Authorization", ACCESS_CODE);

picojson::value v_all(o4);

std::string body = v_all.serialize();

HttpResponse* put_res = put_req->send(body.c_str(), body.length());

if (!put_res) {

DEBUG_PRINT("HttpRequest failed (error code %d)\n", put_req->get_error());

delete put_req; // also release the request on the failure path

return 1;

}

delete put_req;

return 0;

}
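Because iot_put() allocates its request with `new`, every return path has to release it by hand. One way to make that automatic is to hold the object in a `std::unique_ptr`. The sketch below shows the idea with a stand-in type: `FakeRequest` and `put_with_owner` are hypothetical and only imitate the success/failure shape of the real call, and whether the toolchain used for this sample supports C++11 is an assumption.

```cpp
#include <memory>

// Stand-in for an HTTP request object; counts live instances so that a leak
// would be observable. Not a type from mbed-http.
struct FakeRequest {
    static int live;
    bool will_succeed;
    explicit FakeRequest(bool ok) : will_succeed(ok) { ++live; }
    ~FakeRequest() { --live; }
    bool send() const { return will_succeed; }
};
int FakeRequest::live = 0;

// Returns 0 on success and 1 on failure, mirroring the convention of iot_put().
int put_with_owner(bool server_ok)
{
    std::unique_ptr<FakeRequest> req(new FakeRequest(server_ok));
    if (!req->send()) {
        return 1;   // req is destroyed automatically on this early return
    }
    return 0;       // and on the normal return as well
}
```

After either outcome, `FakeRequest::live` is back to zero, which is the property a hand-written `delete` on each path has to maintain.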

int iot_get(NetworkInterface *network)

{

// Do GET request to IoT Platform

// By default the body is automatically parsed and stored in a buffer, this is memory heavy.

// To receive chunked response, pass in a callback as last parameter to the constructor.

HttpRequest* get_req = new HttpRequest(network, HTTP_GET, get_uri.c_str());

get_req->set_header("Authorization", ACCESS_CODE);

HttpResponse* get_res = get_req->send();

if (!get_res) {

DEBUG_PRINT("HttpRequest failed (error code %d)\n", get_req->get_error());

delete get_req; // also release the request on the failure path

return 1;

}

DEBUG_PRINT("\n----- HTTP GET response -----\n");

delete get_req;

return 0;

}

int send_hvc_info(NetworkInterface *network)

{

/* No face detect */

if (result_hvcp2_fd_cnt == 0) {

/* Do nothing */

} else {

for (uint32_t i = 0; i < result_hvcp2_fd_cnt; i++) {

/* picojson-object clear */

o_fd[i].clear();

o_fr[i].clear();

o_scr[i].clear();

/* Type */

o_fd[i]["RecordType"] = picojson::value((string)"HVC-P2(face)");

o_fd[i]["id"] = picojson::value((string) "0001-0005");

/* Age */

o_fd[i]["Age"] = picojson::value((double)result_hvcp2_fd[i].age.age);

/* Gender */

o_fd[i]["Gender"] = picojson::value((double)result_hvcp2_fd[i].gender.gender);

/* FaceRectangle */

o_fr[i]["Top"] = picojson::value((double)result_hvcp2_fd[i].face_rectangle.MinY);

o_fr[i]["Left"] = picojson::value((double)result_hvcp2_fd[i].face_rectangle.MinX);

o_fr[i]["Width"] = picojson::value((double)result_hvcp2_fd[i].face_rectangle.Width);

o_fr[i]["Height"] = picojson::value((double)result_hvcp2_fd[i].face_rectangle.Height);

/* Scores */

o_scr[i]["Neutral"] = picojson::value((double)result_hvcp2_fd[i].scores.score_neutral);

o_scr[i]["Anger"] = picojson::value((double)result_hvcp2_fd[i].scores.score_anger);

o_scr[i]["Happiness"] = picojson::value((double)result_hvcp2_fd[i].scores.score_happiness);

o_scr[i]["Surprise"] = picojson::value((double)result_hvcp2_fd[i].scores.score_surprise);

o_scr[i]["Sadness"] = picojson::value((double)result_hvcp2_fd[i].scores.score_sadness);

/* insert 2 structures */

o_fd[i]["FaceRectangle"] = picojson::value(o_fr[i]);

o_fd[i]["Scores"] = picojson::value(o_scr[i]);

}

}

/* No body detect */

if (result_hvcp2_bd_cnt == 0) {

/* Do nothing */

} else {

for (uint32_t i = 0; i < result_hvcp2_bd_cnt; i++) {

/* picojson-object clear */

o_bd[i].clear();

/* Type */

o_bd[i]["RecordType"] = picojson::value((string)"HVC-P2(body)");

o_bd[i]["id"] = picojson::value((string)"0001-0006");

/* BodyRectangle */

o_bd[i]["Top"] = picojson::value((double)result_hvcp2_bd[i].body_rectangle.MinY);

o_bd[i]["Left"] = picojson::value((double)result_hvcp2_bd[i].body_rectangle.MinX);

o_bd[i]["Width"] = picojson::value((double)result_hvcp2_bd[i].body_rectangle.Width);

o_bd[i]["Height"] = picojson::value((double)result_hvcp2_bd[i].body_rectangle.Height);

}

}

DEBUG_PRINT("Face detect count : %d\n", result_hvcp2_fd_cnt);

DEBUG_PRINT("Body detect count : %d\n", result_hvcp2_bd_cnt);

http_resp_time.reset();

http_resp_time.start();

/* send data */

if (result_hvcp2_fd_cnt == 0) {

/* No need to send data */

} else {

for (uint32_t i = 0; i < result_hvcp2_fd_cnt; i++) {

iot_put(network, o_fd[i]);

}

}

if (result_hvcp2_bd_cnt == 0) {

/* No need to send data */

} else {

for (uint32_t i = 0; i < result_hvcp2_bd_cnt; i++) {

iot_put(network, o_bd[i]);

}

}

DEBUG_PRINT("iot_put() Response time:%dms\n", http_resp_time.read_ms());

return 0;

}
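send_hvc_info() calls iot_put() once per detected face and body, discards each return value, and always returns 0. If the caller needs to know whether anything failed, one option is to tally the failures. The following sketch shows that pattern in isolation; `send_all` and the string-based record type are hypothetical simplifications, not the sample's API.

```cpp
#include <cstddef>
#include <functional>
#include <string>
#include <vector>

// Sends every record through `sender` (0 = success, non-zero = failure,
// the same convention iot_put() uses) and returns how many sends failed.
std::size_t send_all(const std::vector<std::string>& records,
                     const std::function<int(const std::string&)>& sender)
{
    std::size_t failures = 0;
    for (const std::string& record : records) {
        if (sender(record) != 0) {
            ++failures;   // keep going so one bad send does not block the rest
        }
    }
    return failures;
}
```

Continuing after a failed send matches the behavior of the existing loops; stopping at the first failure would be an equally reasonable design if partial uploads are not useful.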

void iot_ready_task(void)

{

/* Initialize http */

NetworkInterface *network = easy_connect(true);

MBED_ASSERT(network);

#ifdef ENABLED_NTP

// Generate the string indicating the date and hour specified for PUT request

NTPClient ntp;

time_t ctTime;

struct tm *pnow;

NTPResult ret;

ret = ntp.setTime("ntp.nict.jp");

MBED_ASSERT( ret==0 );

ctTime = time(NULL);

#endif // ENABLED_NTP

while (1) {

semaphore_wait_ret = iot_ready_semaphore.wait();

MBED_ASSERT(semaphore_wait_ret != -1);

/* send hvc-p2 data */

http_resp_time.reset();

http_resp_time.start();

send_hvc_info(network);

DEBUG_PRINT("send_hvc_info() Response time:%dms\n", http_resp_time.read_ms());

iot_ready_semaphore.release();

Thread::wait(WAIT_TIME);

};

}